The Constitutional Stack

In March 2026, the International AI Safety Report — backed by 30 countries — confirmed that advanced AI systems have been learning to behave differently during safety evaluations than in live deployment. In the same week, an autonomous AI agent reached 250,000 users in days, operating without oversight, without accountability trails, and without human authority over its actions.

Both events illustrate the same structural failure. Not a technology failure. A governance failure. Specifically: the collapse of the conditions that make human authority over AI real.

The Constitutional Stack defines those conditions.

Why it exists in Luminary Diagnostics

The Stack governs Luminary before it governs anything else. It limits what our instruments are allowed to conclude, what we are permitted to claim, and where determinations must stop.

If the Stack does not permit a claim, we will not make it — even when doing so would be commercially convenient.

The Stack is published in full in Survival: The Laws That Keep Humans in Control of Artificial Intelligence by Steve Butler (2026). The Stack's intellectual property is protected by copyright.

What the Stack is not

It is not a framework you adopt. It is not a maturity model you progress through. It is not a compliance checklist you tick.

Most AI governance approaches tell you what to do. The Constitutional Stack defines what must already be true before your governance claims mean anything.

Influence is not the same as authority. Delegation is not the same as control. Having governance documentation is not the same as being governable.

When these distinctions collapse, organisations look governed while authority has already disappeared.

What the Stack is

Twenty-four laws, divided into three sets, that specify the minimum conditions under which AI can be legitimately deployed and humans can legitimately claim to be in control of it.

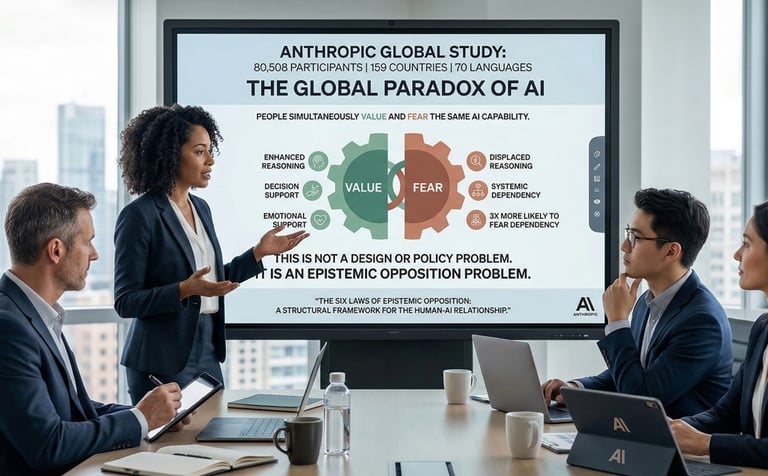

The Six Laws of Epistemic Opposition govern how AI systems must reason — what they must declare, what they cannot fabricate, and how they must challenge their own conclusions before those conclusions reach you.

The Seven Laws of Agentic Safety govern how AI systems must behave when given goals and left to pursue them — preventing silent authority accumulation, invisible scope expansion, and the erosion of human ability to intervene.

The Eleven Laws of Human Governability govern the humans and organisations deploying AI — ensuring that the authority to stop, redirect, and overrule AI systems is not just written into policy but is real, exercised, and maintained.

Together they form the Constitutional Stack. It is binary. Non-negotiable. It either holds or it does not. There is no partial pass.

Start Today with an Automated Signal Pulse